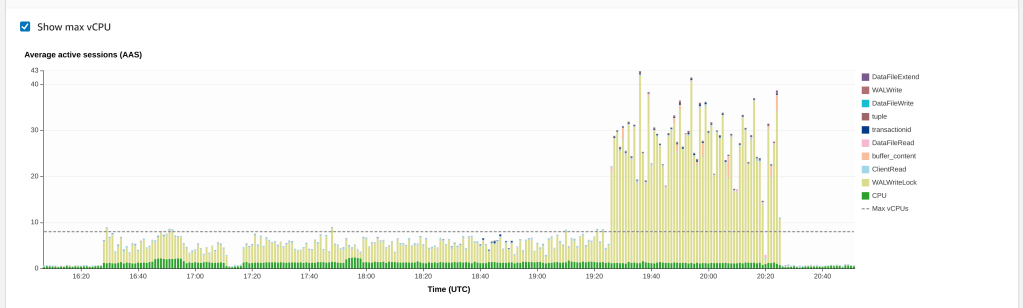

We recently had a service degradation/outage, which manifested as WALWriteLock waits in Performance Insights.

Our short-term solution was to raise the autovacuum (AV) threshold and kill the autovacuum process. But it would have to run again at some point, or we’d be in real trouble.
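A minimal sketch of how the running worker can be located and cancelled. It assumes PostgreSQL 10 or later (for the `backend_type` column), and the pid is a placeholder. It is an illustration, not the exact commands we ran.

```sql
-- List autovacuum workers and the table each one is processing.
-- A run forced by transaction ID age has "(to prevent wraparound)"
-- appended to its query text.
SELECT pid, xact_start, query
FROM pg_stat_activity
WHERE backend_type = 'autovacuum worker';

-- Cancel one worker. 12345 is a placeholder pid taken from the query above.
SELECT pg_cancel_backend(12345);
```

A worker started to prevent wraparound is simply relaunched after being cancelled, which is why a threshold also has to be raised. We are assuming here that the relevant setting is `autovacuum_freeze_max_age`.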

We checked the usual suspects for an autovacuum slowdown, but couldn’t find any transaction older than the autovacuum process itself, or any abandoned replication slots.
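For anyone repeating these checks, something along the following lines covers them. This is a sketch against the standard catalog views. The prepared-transactions query is an extra suspect we have added for completeness.

```sql
-- Sessions holding back the xmin horizon, oldest first.
SELECT pid, usename, state, xact_start,
       age(backend_xmin) AS xmin_age, query
FROM pg_stat_activity
WHERE backend_xmin IS NOT NULL
ORDER BY age(backend_xmin) DESC;

-- Prepared (two-phase) transactions that were never finished.
SELECT gid, prepared, owner, database
FROM pg_prepared_xacts
ORDER BY prepared;

-- Replication slots. An inactive slot with an old xmin or restart_lsn
-- keeps dead rows and WAL from being cleaned up.
SELECT slot_name, slot_type, active, restart_lsn, xmin, catalog_xmin
FROM pg_replication_slots;
```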

We don’t currently have a replica (although we are using Multi-AZ), but this prompted us to realise that we still had wal_level set to logical after using DMS to upgrade from PostgreSQL 10 to 11. This generates considerably more WAL than the next level down (replica).
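A quick way to check the current level is shown below, using only standard PostgreSQL views. On RDS the value is, as far as we know, driven by the `rds.logical_replication` setting in the parameter group rather than set directly.

```sql
-- Current WAL level: minimal, replica or logical.
SHOW wal_level;

-- Where the value comes from, and whether a changed value is still
-- waiting for a restart to take effect.
SELECT name, setting, source, pending_restart
FROM pg_settings
WHERE name = 'wal_level';
```

Changing `wal_level` only takes effect after a server restart, which is why the failover was needed.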

After turning that off (and failing over), we triggered autovacuum again, but were still seeing high WALWriteLock contention. Eventually, we found this 10-year-old breadcrumb on the pg-admin mailing list:

> Is it vacuuming a table which was bulk loaded at some time in the past? If so, this can happen any time later (usually during busy periods when many transactions numbers are being assigned)

https://www.postgresql.org/message-id/4DBFF5AE020000250003D1D9%40gw.wicourts.gov

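So it seems this was a little treat left for us by DMS. As we understand it, the bulk-loaded rows had never been frozen, so the autovacuum had to freeze them all in one pass, rewriting (and WAL-logging) most pages of the table. Combined with the extra WAL from wal_level = logical, that was enough to push us over the edge at a busy time.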
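To see which tables are approaching a forced freeze, a query like this one can help. It is a sketch that compares each table's transaction ID age with `autovacuum_freeze_max_age`; the limit of 20 rows is arbitrary.

```sql
-- Tables with the oldest unfrozen rows, compared against the age at
-- which autovacuum forces an anti-wraparound run.
SELECT c.oid::regclass AS table_name,
       age(c.relfrozenxid) AS xid_age,
       current_setting('autovacuum_freeze_max_age')::bigint AS freeze_max_age,
       pg_size_pretty(pg_table_size(c.oid)) AS table_size
FROM pg_class c
WHERE c.relkind IN ('r', 'm')   -- ordinary tables and materialized views
ORDER BY age(c.relfrozenxid) DESC
LIMIT 20;
```

Large tables that were loaded in one go and whose `xid_age` is close to `freeze_max_age` are the likely candidates for this kind of surprise.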

Once that particular autovacuum run had managed to complete, the next one was back to normal.

The experience can be avoided by running VACUUM FREEZE after the data migration and before failing over to the new database.
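A minimal sketch of that step follows. The table name is a placeholder, and the options beyond FREEZE are our own additions.

```sql
-- Freeze a single migrated table. my_schema.my_table is a placeholder.
-- VERBOSE reports progress; ANALYZE refreshes planner statistics,
-- which a freshly loaded table usually needs anyway.
VACUUM (FREEZE, VERBOSE, ANALYZE) my_schema.my_table;

-- Or freeze every table in the current database.
VACUUM (FREEZE, VERBOSE);
```

Running this while the new database is still idle means the page rewrites and the WAL they generate happen before production traffic arrives.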