We use pgbouncer as a connection pooler, and in one of our production environments (after a recent migration) a portion of connection attempts were failing with ETIMEDOUT.

Our first assumption was that it was due to some limitation of our service provider’s internal network, but they assured us that they couldn’t see any failures, and when we looked at the other end, we couldn’t either.

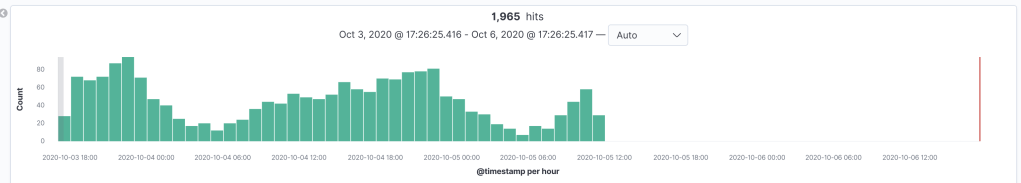

So it seemed to be some limitation on the client host (e.g. hitting the file descriptor limit). We looked at some netstat data and added some Datadog TCP metrics, but nothing stood out.
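As an illustration of the kind of check involved, here is a minimal sketch that tallies TCP sockets by state, much like summarising netstat output by hand. It assumes a Linux host and the text format of `/proc/net/tcp`; the function name `count_tcp_states` is made up for this example and is not what we actually ran.

```python
from collections import Counter

# Hex state codes as they appear in the "st" column of /proc/net/tcp.
TCP_STATES = {
    "01": "ESTABLISHED", "02": "SYN_SENT", "03": "SYN_RECV",
    "04": "FIN_WAIT1", "05": "FIN_WAIT2", "06": "TIME_WAIT",
    "07": "CLOSE", "08": "CLOSE_WAIT", "09": "LAST_ACK",
    "0A": "LISTEN", "0B": "CLOSING",
}


def count_tcp_states(proc_net_tcp_text):
    """Count sockets per TCP state from text in /proc/net/tcp format."""
    counts = Counter()
    # The first line is the column header, so skip it.
    for line in proc_net_tcp_text.splitlines()[1:]:
        fields = line.split()
        if len(fields) < 4:
            continue  # ignore blank or truncated lines
        state_code = fields[3].upper()
        counts[TCP_STATES.get(state_code, state_code)] += 1
    return counts
```

On a Linux box this could be fed with `count_tcp_states(open("/proc/net/tcp").read())`. A large and growing TIME_WAIT or SYN_SENT count towards a single remote address would be one hint worth following up.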

At this point, there seemed to be no other option than to use tcpdump and see if we could find out why the connections were failing. We fired it up:

    sudo tcpdump -i eth2 -w tcpdump.log

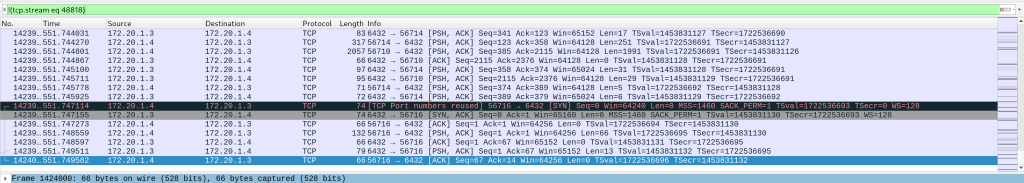

We then downloaded the output and opened it in Wireshark. Following some helpful instructions, we identified some likely packets.

At this point it was starting to look like we were suffering from ephemeral port exhaustion, so we decided to experiment with running pgbouncer on the app server instead, as that would reduce the number of open sockets between the hosts.
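For a rough sense of the numbers: every outgoing connection to the same destination address and port needs its own local port, and on Linux a closed connection holds its port in TIME_WAIT for 60 seconds on whichever side closed first. The sketch below is a back-of-the-envelope estimate, not something we measured. It assumes the stock Linux port range of 32768 to 60999 (read from `/proc/sys/net/ipv4/ip_local_port_range`), and the function names are invented for illustration.

```python
def parse_port_range(text):
    """Parse the contents of /proc/sys/net/ipv4/ip_local_port_range."""
    low, high = (int(part) for part in text.split())
    return low, high


def ephemeral_port_count(low, high):
    """Number of local ports available for outgoing connections (inclusive range)."""
    return high - low + 1


def max_sustained_connection_rate(low, high, time_wait_seconds=60):
    """Approximate new connections per second to ONE destination ip:port
    before every local port is tied up in TIME_WAIT.

    Assumes the client closes first and that no port-reuse tuning is active.
    """
    return ephemeral_port_count(low, high) / time_wait_seconds


# Assumed default range; the real value on a given host may differ.
low, high = parse_port_range("32768\t60999\n")
print(ephemeral_port_count(low, high))  # 28232
print(max_sustained_connection_rate(low, high))
```

Under those assumptions the budget is about 470 new connections per second to a single pgbouncer address, which a busy app server opening short-lived connections could plausibly exceed.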

A resounding success! I’m sure it would also be possible to tune some Linux TCP options to the same effect, but this was acceptable for us (there’s only one app server in that environment).

I’m not entirely sure why we were getting a timeout, rather than EADDRNOTAVAIL, but that may be due to the client library we are using to connect.
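To make the distinction between the two error codes concrete, here is a small sketch that turns an `OSError` from a failed connection attempt into a readable note. It uses Python purely for illustration and says nothing about what our client library does internally; `describe_connect_error` and the wording of the notes are my own.

```python
import errno

# Short notes on what each code usually means for an outgoing TCP connect.
EXPLANATIONS = {
    errno.ETIMEDOUT: "the connection attempt got no answer in time",
    errno.EADDRNOTAVAIL: "no local address or port could be assigned",
    errno.ECONNREFUSED: "the remote host actively refused the connection",
}


def describe_connect_error(exc):
    """Return 'NAME: note' for an OSError raised by a connection attempt."""
    # exc.errno can be None, e.g. for a timeout raised by a library itself
    # rather than by the operating system.
    name = errno.errorcode.get(exc.errno, "UNKNOWN")
    note = EXPLANATIONS.get(exc.errno, "no note for this error code")
    return f"{name}: {note}"
```

A library that applies its own connect timeout, or that retries before giving up, can report a timeout even when the underlying system call failed for a different reason, which is one way the two cases could end up looking the same from the application.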